How it works

From policy to evidence.

Every session follows the same operational loop: policy defines expected behavior → scenario tests the policy → team executes → evidence is produced.

Step 1

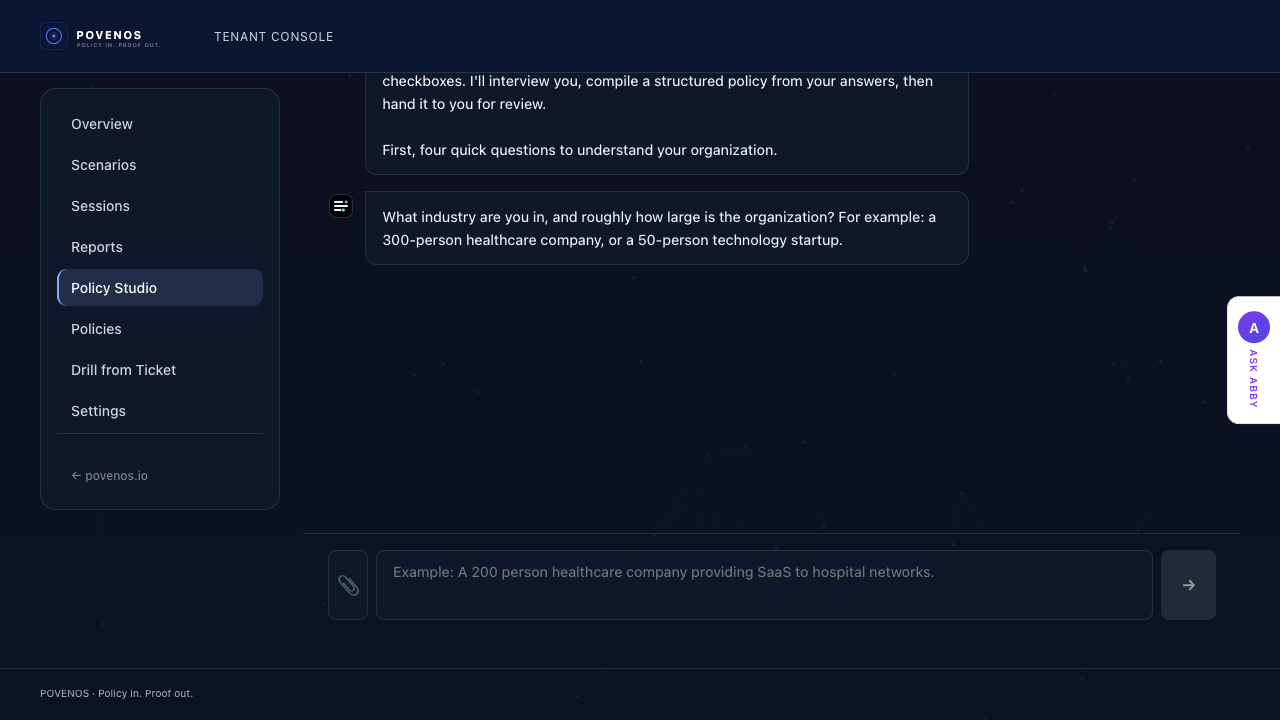

Define the procedure.

Define how operational disruptions should be handled: who owns the response and how escalation works.

Abby analyzes governance documents, identifies gaps, asks targeted questions, and generates enterprise-grade policy drafts. She maps policies to frameworks and converts them into simulation rules.

Policies can also be built directly inside the studio. Either way, the result is a procedure ready to validate.

Step 2

Launch a scenario.

Choose a scenario from the library, upload a ticket from your ITSM platform, or let the platform generate one.

Scenarios introduce ransomware outbreaks, production outages, data breaches, vendor compromises, and infrastructure failures. They reflect real operational conditions: incomplete information, conflicting signals, and time pressure.

Upload real tickets from ConnectWise, PagerDuty, ServiceNow, or any ITSM platform to convert them into training simulations.

Step 3

Team executes in real time.

Participants respond from their operational role (incident commander, engineer, or operations lead), mirroring how disruptions unfold in real organizations.

The platform observes without guiding. Participants are not prompted toward correct answers; Povenos measures actual behavior.

Teams escalate, communicate, and make calls with incomplete information, exactly the conditions they face during real disruptions.

Adaptive Pressure

Pressure escalates with the scenario.

Sessions adapt dynamically. Signals arrive in waves, conditions change, and Abby adjusts coaching based on the operator's decisions. The platform creates real urgency without requiring external coordination.

Step 4

The system records execution.

Every action, decision, and delay is timestamped. The system records acknowledgement, escalation timing, policy steps followed or skipped, and coordination failures.
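As a rough illustration of what such a record could look like, the sketch below derives acknowledgement time, escalation time, and followed or skipped policy steps from a list of timestamped events. It is not the Povenos data model: the `Event` schema, the event kinds, the step names, and the `summarize` helper are all hypothetical and chosen only for this example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass(frozen=True)
class Event:
    """One timestamped action in a session (hypothetical schema)."""
    at: datetime
    actor: str
    kind: str                   # assumed kinds: "alert", "acknowledge", "escalate", "policy_step"
    step: Optional[str] = None  # policy step name when kind == "policy_step"


def first_time(events, kind):
    """Return the earliest timestamp of the given event kind, or None."""
    times = [e.at for e in events if e.kind == kind]
    return min(times) if times else None


def summarize(events, policy_steps):
    """Derive timing and policy-adherence figures from a session's events."""
    start = first_time(events, "alert")
    ack = first_time(events, "acknowledge")
    esc = first_time(events, "escalate")
    done = {e.step for e in events if e.kind == "policy_step"}

    def elapsed(t):
        # Elapsed time since the first alert, if both timestamps exist.
        return t - start if start is not None and t is not None else None

    return {
        "time_to_acknowledge": elapsed(ack),
        "time_to_escalate": elapsed(esc),
        "steps_followed": [s for s in policy_steps if s in done],
        "steps_skipped": [s for s in policy_steps if s not in done],
    }


# Illustrative session: all names and timings are made up.
t0 = datetime(2024, 1, 1, 9, 0, 0)
events = [
    Event(t0, "monitoring", "alert"),
    Event(t0 + timedelta(minutes=4), "engineer", "acknowledge"),
    Event(t0 + timedelta(minutes=6), "engineer", "policy_step", "isolate_host"),
    Event(t0 + timedelta(minutes=19), "engineer", "escalate"),
]
policy = ["isolate_host", "notify_incident_commander", "open_bridge"]
summary = summarize(events, policy)
```

In this made-up session, the summary would show one policy step followed and two skipped, alongside the elapsed time from the first alert to acknowledgement and to escalation.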

Step 5

Evidence is produced.

After the session, the platform produces a structured evidence report showing how the response actually unfolded.

Every session reveals gaps: steps skipped, escalations delayed, decisions unclear. These show where written procedure and real execution diverge. The report shows overall performance, the execution timeline, which policy steps were followed or skipped, and how this run compares to previous ones.

Leaders can review the timeline. Teams can replay the scenario and see exactly where execution broke down.

The loop repeats.

Operational readiness is not a one-time event. Organizations can run sessions repeatedly as procedures evolve. Over time, sessions create an operational memory showing how response patterns change and where recurring gaps appear.

Operational response procedures are written during calm moments.

Their effectiveness is revealed during chaotic ones. Povenos allows organizations to discover the difference.